
In response to recently enacted state legislation in Iowa, administrators are removing banned books from Mason City school libraries, and officials are using ChatGPT to help them pick the books, according to The Gazette and Popular Science.

The new law behind the ban, signed by Governor Kim Reynolds, is part of a wave of educational reforms that Republican lawmakers believe are necessary to protect students from exposure to damaging and obscene materials. Specifically, Senate File 496 mandates that every book available to students in school libraries be “age appropriate” and devoid of any “descriptions or visual depictions of a sex act,” per Iowa Code 702.17.

But banning books is hard work, according to administrators, so they need to rely on machine intelligence to get it done within the three-month window mandated by the law. “It is simply not feasible to read every book and filter for these new requirements,” said Bridgette Exman, the assistant superintendent of the school district, in a statement quoted by The Gazette. “Therefore, we are using what we believe is a defensible process to identify books that should be removed from collections at the start of the 23-24 school year.”

The district shared its methodology: “Lists of commonly challenged books were compiled from several sources to create a master list of books that should be reviewed. The books on this master list were filtered for challenges related to sexual content. Each of these texts was reviewed using AI software to determine if it contains a depiction of a sex act. Based on this review, there are 19 texts that will be removed from our 7-12 school library collections and stored in the Administrative Center while we await further guidance or clarity. We also will have teachers review classroom library collections.”

Unfit for this purpose

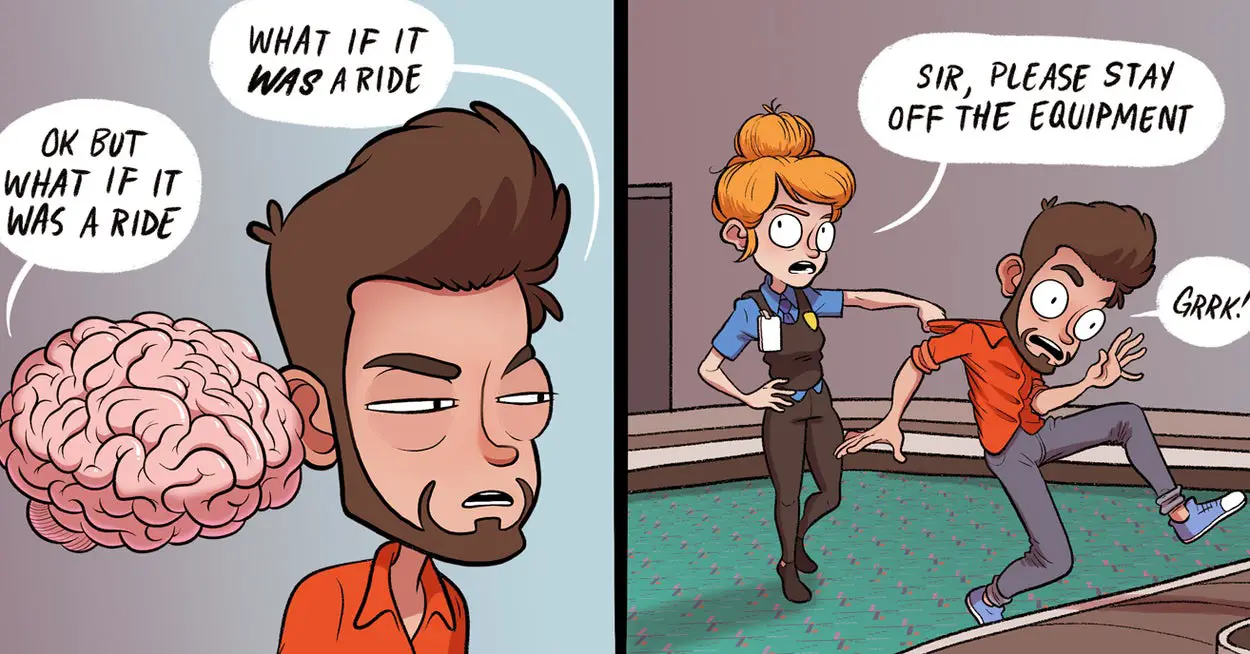

In the wake of ChatGPT’s release, it has become increasingly common to see the AI assistant stretched beyond its capabilities, and to read about humans accepting its inaccurate outputs because of automation bias, the tendency to place undue trust in machine decision-making. In this case, that bias is doubly convenient for administrators because they can pass responsibility for the decisions to the AI model. However, the machine is not equipped to make these kinds of decisions.

Large language models, such as those that power ChatGPT, are not oracles of infinite wisdom, and they make poor factual references. They are prone to confabulate information when it is not in their training data. Even when the data is present, their judgment should not serve as a substitute for a human—especially concerning matters of law, safety, or public health.

“This is the perfect example of a prompt to ChatGPT which is almost certain to produce convincing but utterly unreliable results,” Simon Willison, an AI researcher who often writes about large language models, told Ars. “The question of whether a book contains a description or depiction of a sex act can only be accurately answered by a model that has seen the full text of the book. But OpenAI won’t tell us what ChatGPT has been trained on, so we have no way of knowing if it’s seen the contents of the book in question or not.”

It’s highly unlikely that ChatGPT’s training data includes the entire text of each book in question. If a book is famous enough, the data may include discussions of its content, but those are not an accurate source of information either.

“We can guess at how it might be able to answer the question, based on the swathes of the Internet that ChatGPT has seen,” Willison said. “But that lack of transparency leaves us working in the dark. Could it be confused by Internet fan fiction relating to the characters in the book? How about misleading reviews written online by people with a grudge against the author?”

Indeed, even cursory tests by others suggest that ChatGPT is unsuitable for this task. When Popular Science questioned ChatGPT about the books on the potential ban list, it found uneven results, some of which apparently did not match the bans put in place.

Even if officials were to feed the text of each book into the version of ChatGPT with the longest context window, the 32K token model (tokens are chunks of words), it would likely be unable to consider the entire text of most books at once, though it may be able to process a book in chunks. Even then, one should not trust the result without verifying it, which would require a human to read the book anyway.
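To illustrate what processing a book “in chunks” might involve, here is a minimal Python sketch. It is not the district’s method or anything OpenAI provides: the function name `chunk_words` is hypothetical, and it treats whitespace-separated words as a rough stand-in for tokens, which real tokenizers do not match exactly. Each chunk overlaps the previous one slightly so a passage straddling a boundary is not cut in half.

```python
def chunk_words(text, max_tokens=32_000, overlap=200):
    """Split text into overlapping windows of at most max_tokens words.

    Assumption: one whitespace-separated word counts as one "token".
    Real tokenizers split text differently, so this is only an estimate.
    """
    if max_tokens <= 0 or not 0 <= overlap < max_tokens:
        raise ValueError("need max_tokens > 0 and 0 <= overlap < max_tokens")

    words = text.split()
    step = max_tokens - overlap  # how far each new window advances
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_tokens]))
        # Stop once a window has reached the end of the text.
        if start + max_tokens >= len(words):
            break
    return chunks
```

Each chunk would then have to be submitted and judged separately, so the model never sees the whole book at once, and every per-chunk answer would still need a human to verify it.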

“There’s something ironic about people in charge of education not knowing enough to critically determine which books are good or bad to include in curriculum, only to outsource the decision to a system that can’t understand books and can’t critically think at all,” Dr. Margaret Mitchell, chief ethics scientist at Hugging Face, told Ars.
